2022-11-01

Dataset Overview, Underperforming Slices, Scenario Tests, Model Comparison and Faster Model Commit

Highlights

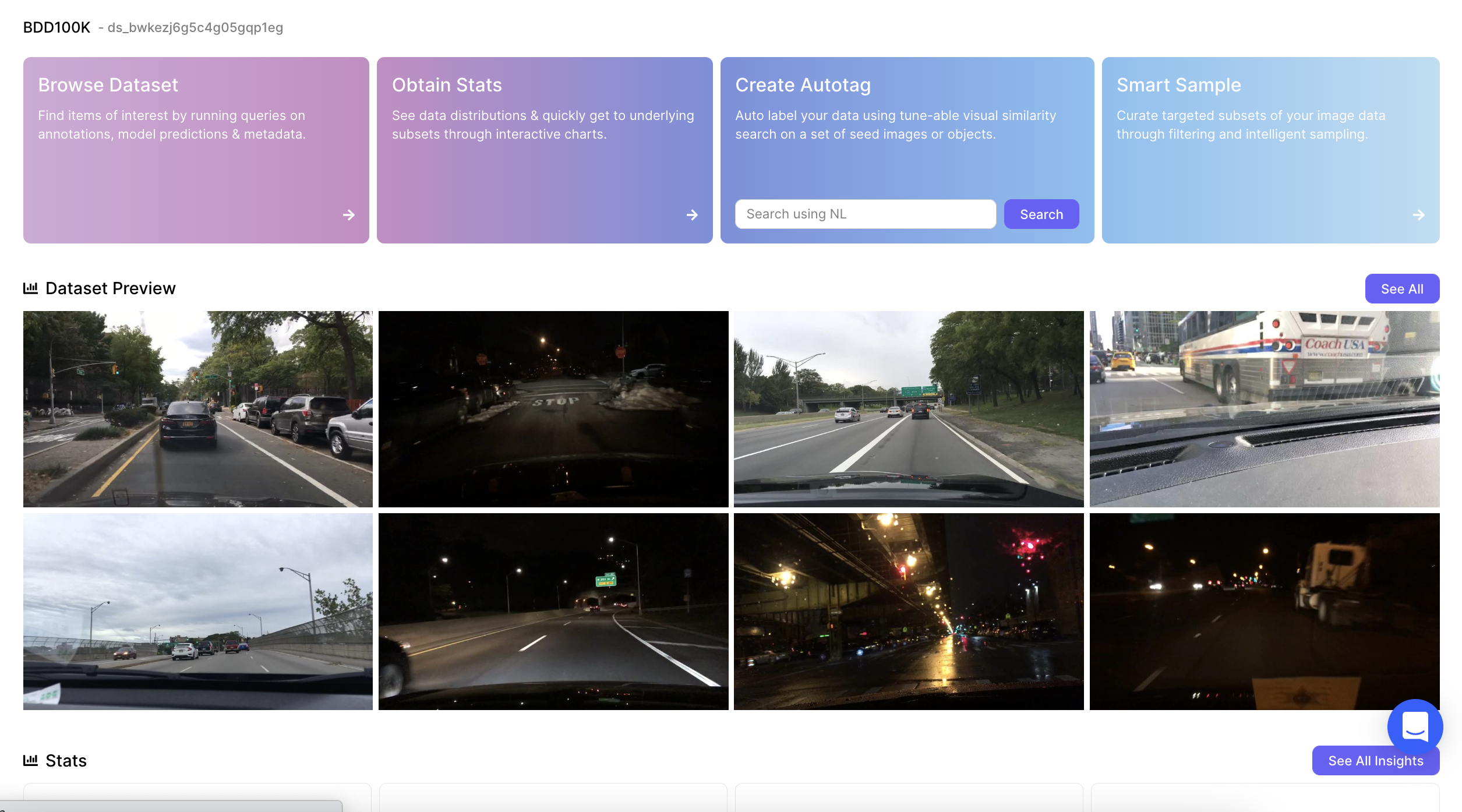

📊 Dataset Overview

Clicking a dataset card on the Nucleus homepage now opens the dataset overview. This page provides a digestible summary of the dataset, including item previews, distribution charts, and associated models and slices. It also provides easy access to all core features, e.g. autotag and smart sample. This is useful for understanding the dataset before diving deeper.

See dataset overview for BDD100K →

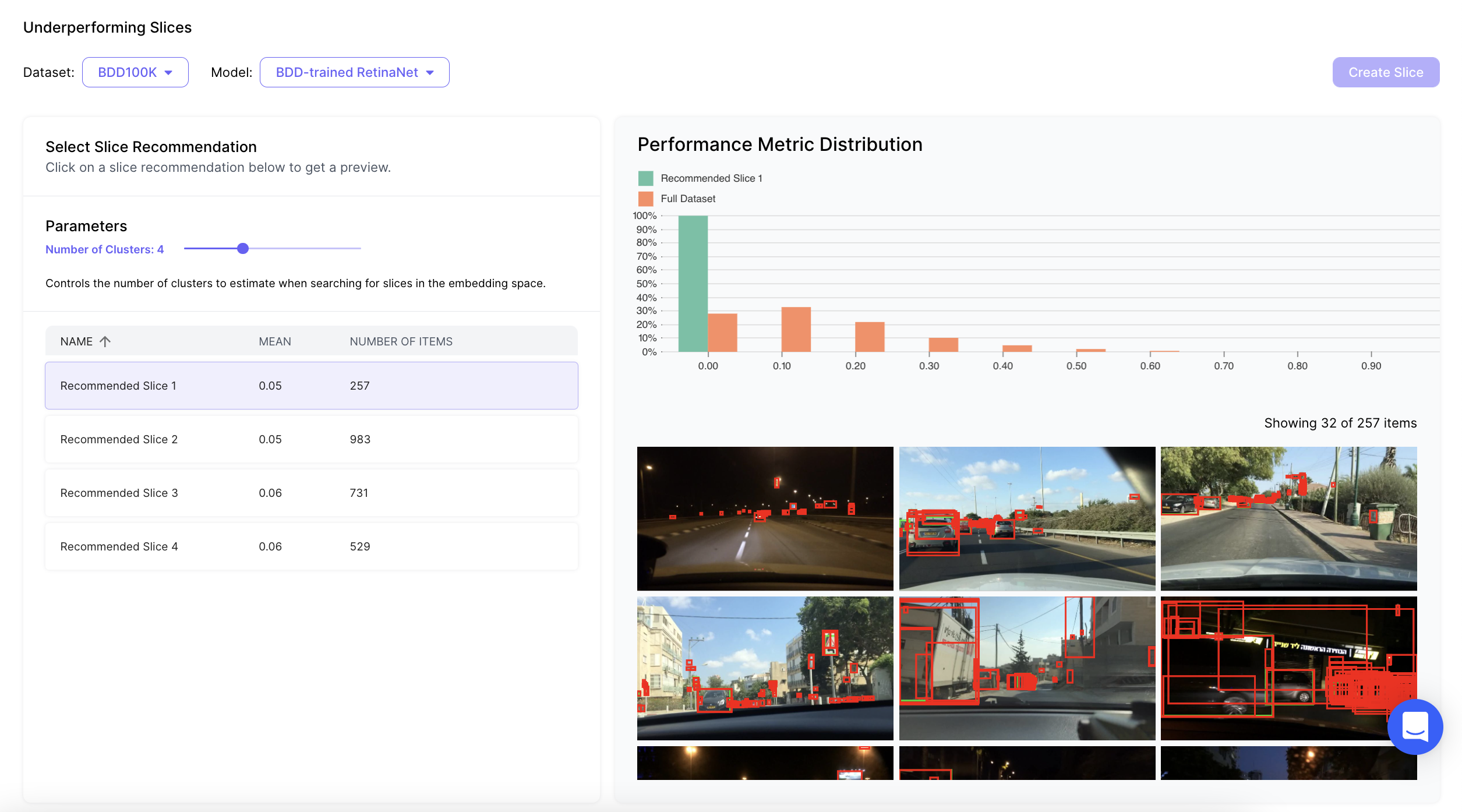

🔎 Underperforming Slices

Nucleus now automatically surfaces underperforming data slices based on model predictions. To obtain these slices, users only need to select a dataset and a model and set the number of clusters. Using these inputs, Nucleus produces semantically coherent slices where the model performs below average. This is useful for understanding scenarios where the model can be improved.

Obtain underperforming slices on a public dataset →
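
As a rough illustration of the idea, and not Nucleus's actual implementation, the sketch below assumes each item already has a cluster assignment and a per-item score (for example, a per-image metric). It keeps the clusters whose mean score falls below the dataset-wide mean. The function name and the sample data are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def underperforming_clusters(cluster_ids, scores):
    """Return {cluster_id: mean_score} for clusters scoring below the overall mean.

    Both arguments are hypothetical inputs: one cluster id and one score per item.
    """
    if len(cluster_ids) != len(scores):
        raise ValueError("cluster_ids and scores must have the same length")

    overall = mean(scores)

    # Group the per-item scores by cluster.
    by_cluster = defaultdict(list)
    for cluster_id, score in zip(cluster_ids, scores):
        by_cluster[cluster_id].append(score)

    # Keep only clusters whose mean is below the overall mean, worst first.
    below = {
        cluster_id: mean(values)
        for cluster_id, values in by_cluster.items()
        if mean(values) < overall
    }
    return dict(sorted(below.items(), key=lambda kv: kv[1]))


# Made-up example: three clusters of two items each.
cluster_ids = [0, 0, 1, 1, 2, 2]
scores = [0.9, 0.8, 0.4, 0.5, 0.7, 0.9]
result = underperforming_clusters(cluster_ids, scores)
print(result)
```

In this made-up example only cluster 1 falls below the overall mean, so it is the only candidate slice returned.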

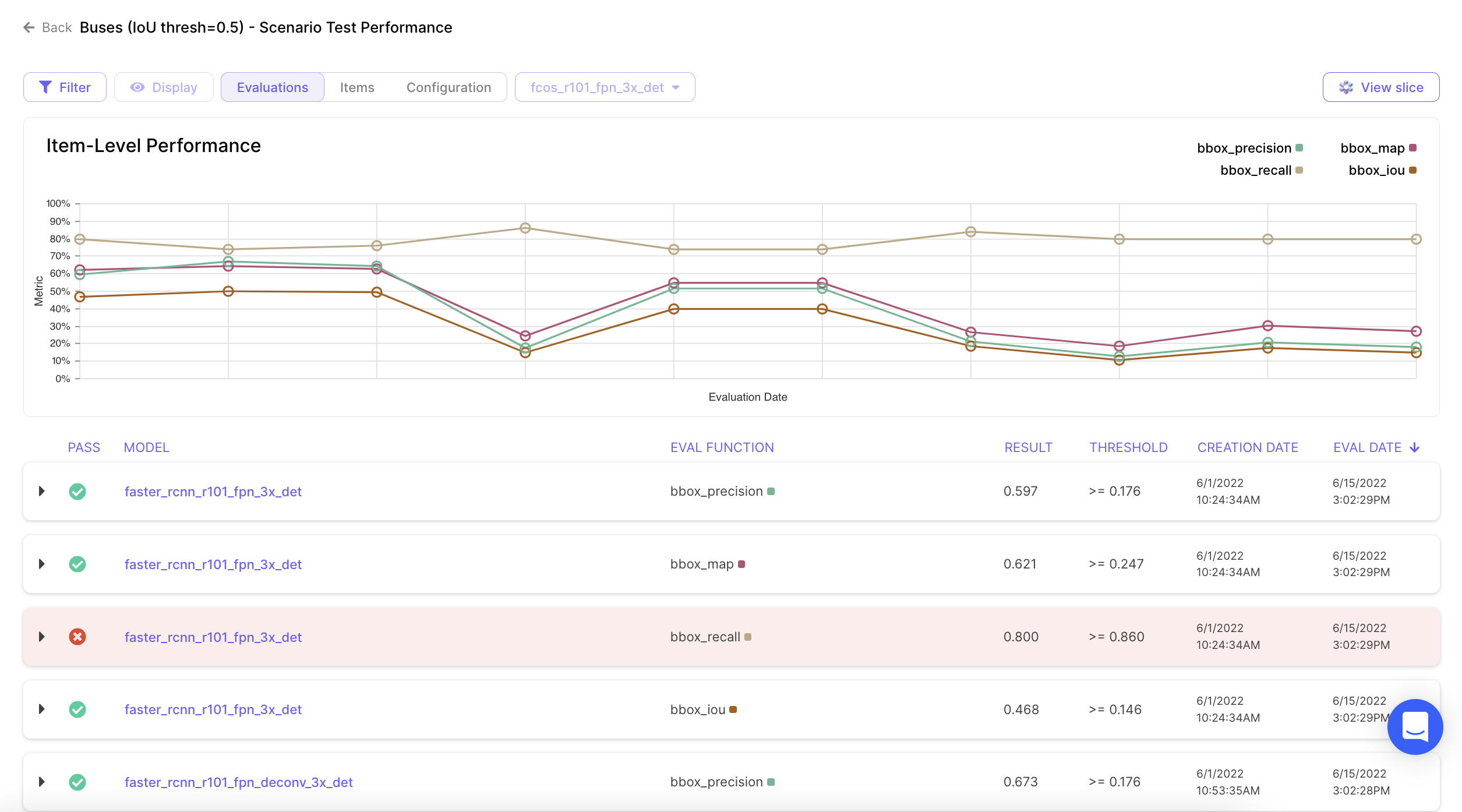

✅ Scenario Tests

Scenario tests for models are the equivalent of unit tests for code. These tests allow users to obtain and track model performance on a metric of choice, e.g. mAP. Scenario tests can be easily set up using a Nucleus slice and out-of-the-box metrics. This is particularly useful for avoiding regressions on critical scenarios. Currently, bbox, semseg, polygon and cuboid predictions are supported.

Explore a public scenario test →
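
To make the unit-test analogy concrete, here is a minimal sketch of a threshold-based check over a slice. It does not use the Nucleus SDK. `ScenarioResult`, `run_scenario_test`, the slice name and the scores are all hypothetical, and the metric is assumed to be a simple mean of per-item scores.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Sequence


@dataclass
class ScenarioResult:
    name: str
    value: float
    threshold: float
    passed: bool


def run_scenario_test(
    name: str,
    item_scores: Sequence[float],
    threshold: float,
    aggregate: Callable[[Sequence[float]], float] = mean,
) -> ScenarioResult:
    """Aggregate per-item scores on a slice and compare against a pass threshold."""
    value = aggregate(item_scores)
    return ScenarioResult(name, value, threshold, value >= threshold)


# Made-up per-item scores for two model versions on the same critical slice.
v1 = run_scenario_test("night-time pedestrians", [0.8, 0.7, 0.9], threshold=0.75)
v2 = run_scenario_test("night-time pedestrians", [0.6, 0.7, 0.8], threshold=0.75)

for label, result in (("v1", v1), ("v2", v2)):
    status = "PASS" if result.passed else "FAIL"
    print(f"{label}: {result.value:.2f} vs {result.threshold:.2f} -> {status}")
```

Here the second version would be flagged as a regression on the slice, much as a failing unit test flags a code change.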

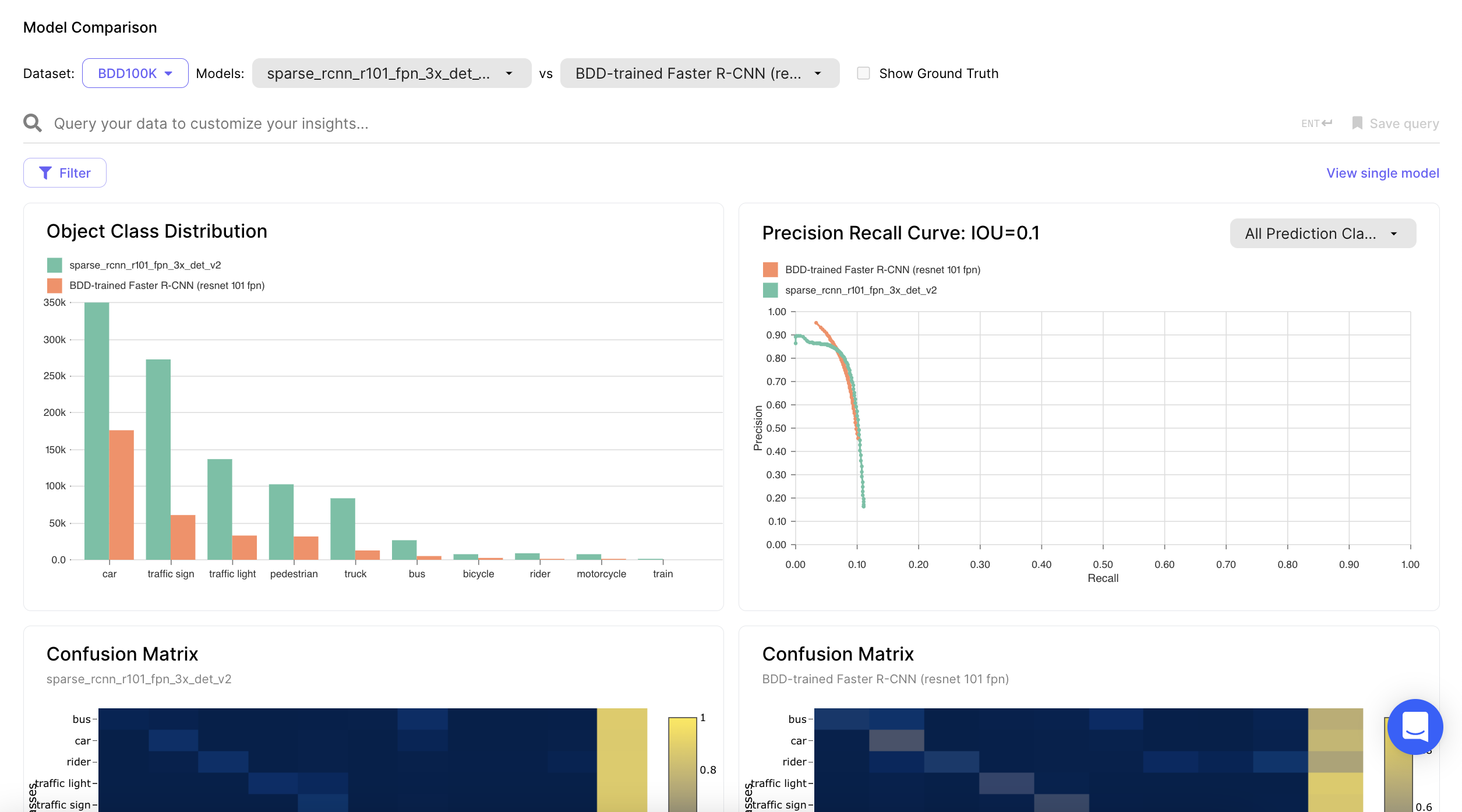

🆚 Model Comparison

Nucleus now allows comparison of models. Users can select two models and compare them using several charts, including object distribution, PR curve and confusion matrix. In addition, users can vary the IoU and confidence thresholds to see the comparison at various operating points. This is very useful when trying to understand the strengths and weaknesses of one model relative to another.

Compare public models on BDD100K →
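
The effect of moving the confidence threshold can be sketched as follows. This is an illustrative calculation, not how Nucleus builds its charts. It assumes each prediction has already been labeled as a true or false positive at a fixed IoU threshold, and the function name and sample predictions are hypothetical.

```python
def precision_recall(predictions, num_ground_truth, conf_threshold):
    """Compute (precision, recall) at one confidence threshold.

    predictions: list of (confidence, is_true_positive) pairs, where the
    true-positive flag was decided beforehand at a fixed IoU threshold.
    """
    kept = [is_tp for conf, is_tp in predictions if conf >= conf_threshold]
    true_positives = sum(kept)
    # With no predictions kept, precision is set to 1.0 by convention.
    precision = true_positives / len(kept) if kept else 1.0
    recall = true_positives / num_ground_truth if num_ground_truth else 0.0
    return precision, recall


# Made-up predictions for two models over the same 4 ground-truth objects.
model_a = [(0.9, True), (0.8, True), (0.6, False), (0.4, True)]
model_b = [(0.95, True), (0.7, False), (0.5, False), (0.3, True)]
num_gt = 4

for threshold in (0.3, 0.5, 0.8):
    pa, ra = precision_recall(model_a, num_gt, threshold)
    pb, rb = precision_recall(model_b, num_gt, threshold)
    print(f"conf>={threshold}: A P={pa:.2f} R={ra:.2f} | B P={pb:.2f} R={rb:.2f}")
```

Sweeping the threshold this way yields the points of a PR curve for each model, which is what makes comparing operating points meaningful.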

What else is new?

⚡ Faster Model Commit

We have revamped our model evaluation pipeline, so you can now commit models and obtain evaluations, including IoU matches and confusions, up to 100x faster! This pipeline supports bbox, polygon and cuboid predictions, with semantic segmentation and categorization support coming soon.
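
For readers unfamiliar with IoU matching, the sketch below shows one common approach for axis-aligned boxes: greedy matching in descending confidence order. It is an assumption for illustration only; the matching strategy used by the Nucleus pipeline is not described here, and the function names and boxes are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def greedy_match(preds, gts, iou_threshold=0.5):
    """Match predictions to ground truth, highest confidence first.

    preds: list of (box, confidence); gts: list of boxes.
    Returns a list of (pred_index, gt_index, iou) for matched pairs.
    """
    order = sorted(range(len(preds)), key=lambda i: preds[i][1], reverse=True)
    unmatched = set(range(len(gts)))
    matches = []
    for pred_index in order:
        best_gt, best_iou = None, iou_threshold
        for gt_index in sorted(unmatched):
            value = iou(preds[pred_index][0], gts[gt_index])
            if value >= best_iou:
                best_gt, best_iou = gt_index, value
        if best_gt is not None:
            # Each ground-truth box can be matched at most once.
            unmatched.discard(best_gt)
            matches.append((pred_index, best_gt, best_iou))
    return matches


# Made-up boxes: two ground-truth objects and three predictions.
gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
preds = [((1, 1, 11, 11), 0.9), ((50, 50, 60, 60), 0.8), ((20, 20, 30, 30), 0.7)]
matches = greedy_match(preds, gts)
print(matches)
```

Unmatched predictions (such as the second one here) would count as false positives, and unmatched ground-truth boxes as false negatives.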