Visual Similarity Search

Note: Image-level similarity search is enabled by default for all datasets. Object-level similarity search, however, is currently available only to enterprise users. Reach out to [email protected] if you'd like to learn more.

Similarity Search

Similarity search helps you find images or objects in your dataset that resemble ones you pick. It is especially useful when your dataset has no labels: you can use it to surface interesting scenarios in your unlabeled data and send them to labeling.

Similarity search works by comparing embeddings, which are high-dimensional vectors that store compressed information about images and objects. By default, we compute embeddings for every image you upload to your datasets, using an image classification model trained on ImageNet. To use your own embeddings instead, upload them through our custom embedding API endpoint.
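To make "comparing embeddings" concrete, here is a minimal sketch of nearest-neighbor search over a handful of embeddings. It assumes cosine similarity as the comparison metric; this page does not say which metric Nucleus uses, so treat that choice, the function names `cosine_similarity` and `most_similar`, and the toy vectors as hypothetical illustrations rather than Nucleus internals.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embeddings: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction; treat it as dissimilar
    return dot / (norm_a * norm_b)


def most_similar(query: list[float], embeddings: dict[str, list[float]], k: int) -> list[str]:
    """Return the ids of the k items whose embeddings are closest to `query`.

    Assumption: cosine similarity is the metric; Nucleus may use another one.
    """
    ranked = sorted(
        embeddings,
        key=lambda item_id: cosine_similarity(query, embeddings[item_id]),
        reverse=True,  # highest similarity first
    )
    return ranked[:k]


# Hypothetical toy 3-dimensional embeddings; real image embeddings
# typically have far more dimensions.
embeddings = {
    "rose_1": [0.9, 0.1, 0.0],
    "rose_2": [0.8, 0.2, 0.1],
    "cat_1": [0.1, 0.9, 0.0],
    "car_1": [0.0, 0.1, 0.9],
}
print(most_similar([1.0, 0.0, 0.0], embeddings, k=2))  # ['rose_1', 'rose_2']
```

Truncating to `k` results mirrors the fixed-length result grid described on this page; the real system presumably uses an approximate index rather than scoring every item, but the ranking idea is the same.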

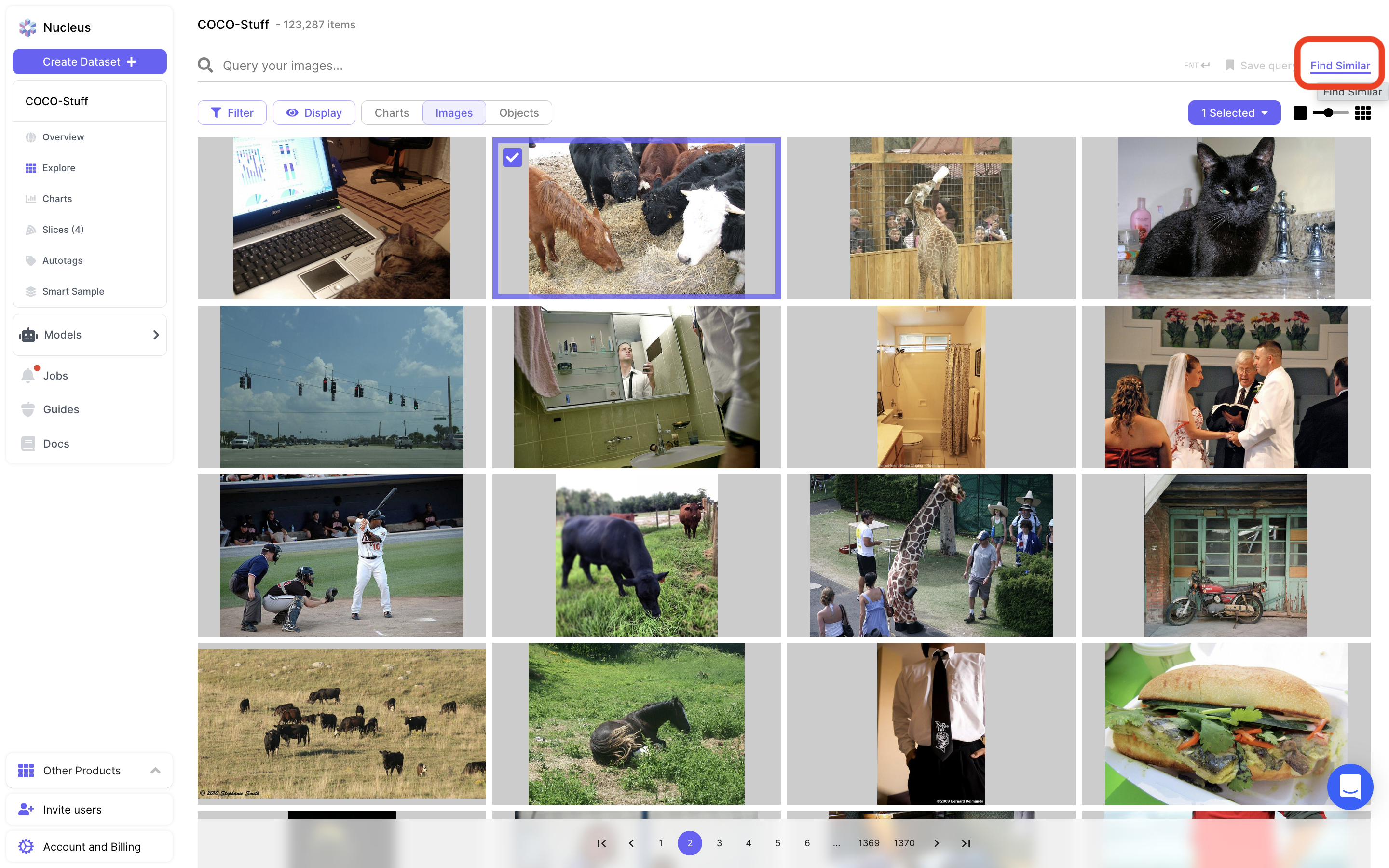

To use this feature, first select some images or objects in the corresponding grid view. As soon as at least one item is selected, the "Find Similar" button near the top of the Nucleus page becomes active:

Finding similar images from the grid view dashboard.

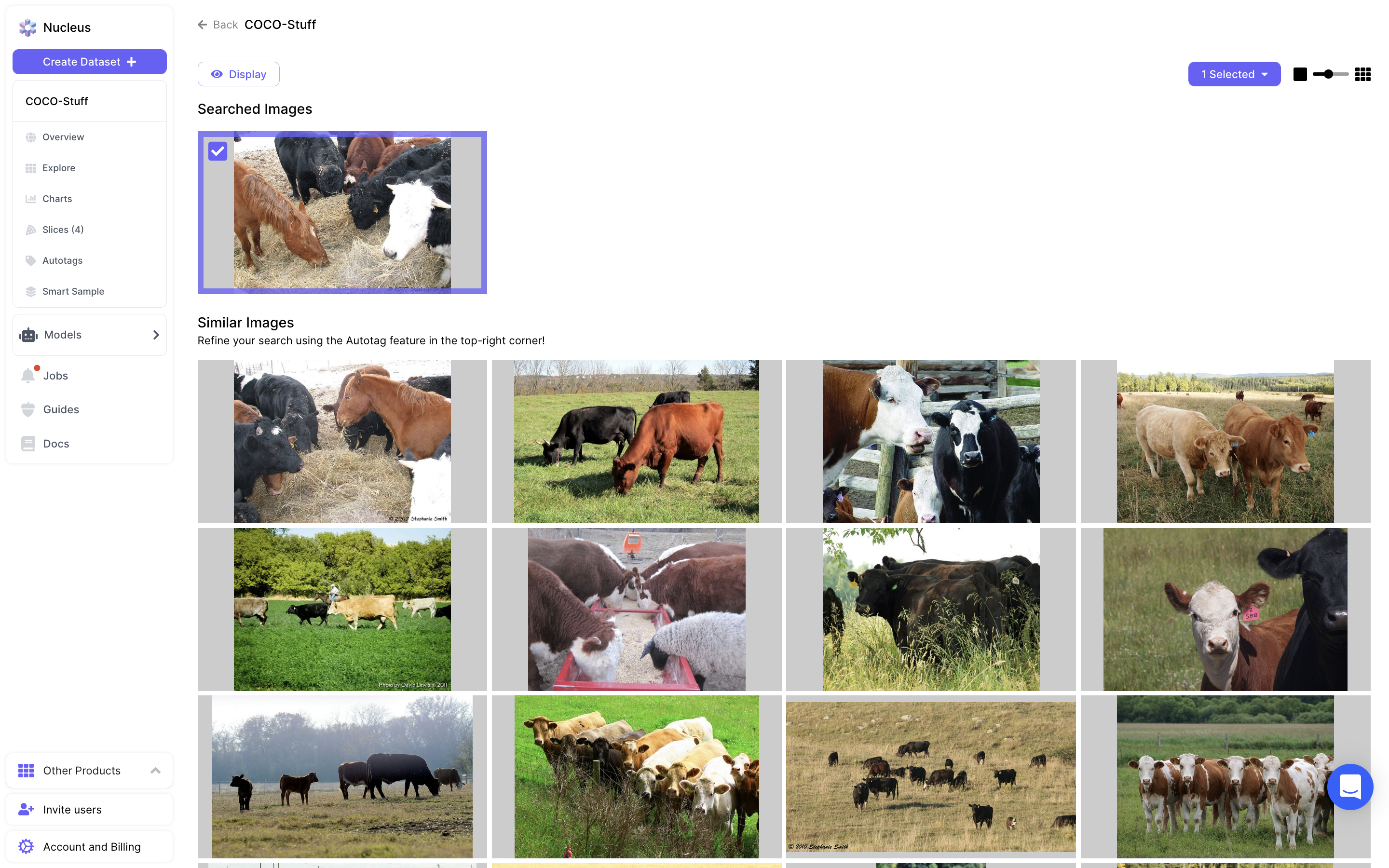

Clicking "Find Similar" opens a page that shows the items you selected and a fixed-length grid of similar items that Nucleus found:

The result of running similarity search.

If you select multiple items, Nucleus returns the items most similar to each selected item individually, not items that resemble all of them at once. For example, if you select an image of a rose and an image of a cat, the results will be images of either roses or cats.
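One way to reproduce the behavior described above is to score each candidate by its similarity to the closest selected item (a maximum over selections) instead of averaging the selections into a single query. The sketch below is a hypothetical illustration under that assumption, with cosine similarity as the assumed metric; it is not Nucleus's documented algorithm, and `find_similar` plus the toy embeddings are made-up names and data.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embeddings: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_similar(selected: list[str], embeddings: dict[str, list[float]], k: int) -> list[str]:
    """Rank unselected items by their similarity to the closest selected item."""

    def best_score(item_id: str) -> float:
        # Max over selections: an item only needs to resemble ONE selected item.
        return max(cosine_similarity(embeddings[s], embeddings[item_id]) for s in selected)

    candidates = [item_id for item_id in embeddings if item_id not in selected]
    return sorted(candidates, key=best_score, reverse=True)[:k]


# Hypothetical toy embeddings: one axis for "rose", one for "cat", one for "car".
embeddings = {
    "rose_query": [1.0, 0.0, 0.0],
    "cat_query": [0.0, 1.0, 0.0],
    "rose_2": [0.9, 0.1, 0.0],
    "cat_2": [0.1, 0.9, 0.0],
    "rose_cat_blend": [0.6, 0.6, 0.0],  # halfway between the two selections
    "car_1": [0.0, 0.0, 1.0],
}
print(find_similar(["rose_query", "cat_query"], embeddings, k=2))  # a rose and a cat, not the blend
```

The design choice matters: averaging the two selections into one query vector would instead rank `rose_cat_blend` first, returning items that sit between the selections rather than roses or cats.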

Note that this feature has limits: Nucleus returns only a dozen or so similar results, and apart from choosing the items that start the search, you have no control over the results. For more control and a much more powerful visual similarity tool, continue reading to learn how to use Nucleus Autotag.
